You Can't Change Your Face: Why Biometric Data Is a Permanent Security Risk

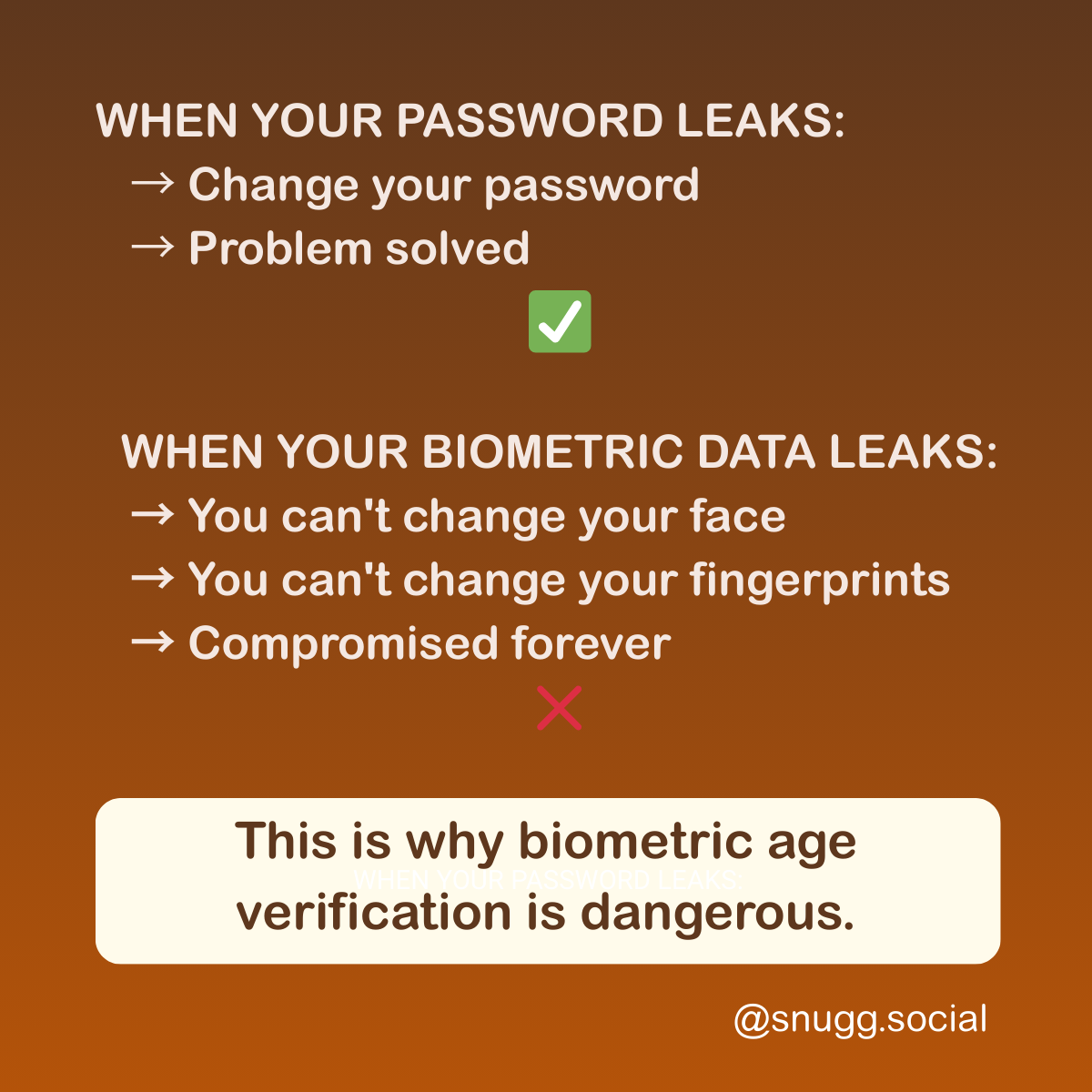

When your password leaks, you change it. When your biometric data leaks, you're compromised forever.

I posted something on social media last week.

A simple message about biometric data. About what happens when passwords leak versus what happens when your face leaks.

Over 1,000 people shared it.

That's not normal for my posts. But this one hit something.

When your password leaks:

→ Change your password

→ Problem solved

When your biometric data leaks:

→ You can't change your face

→ You can't change your fingerprints

→ The compromise is permanent

→ Forever in breach databases

It resonated because it's true.

But sharing a problem isn't enough. We need to understand what we're actually dealing with here.

The thing about faces

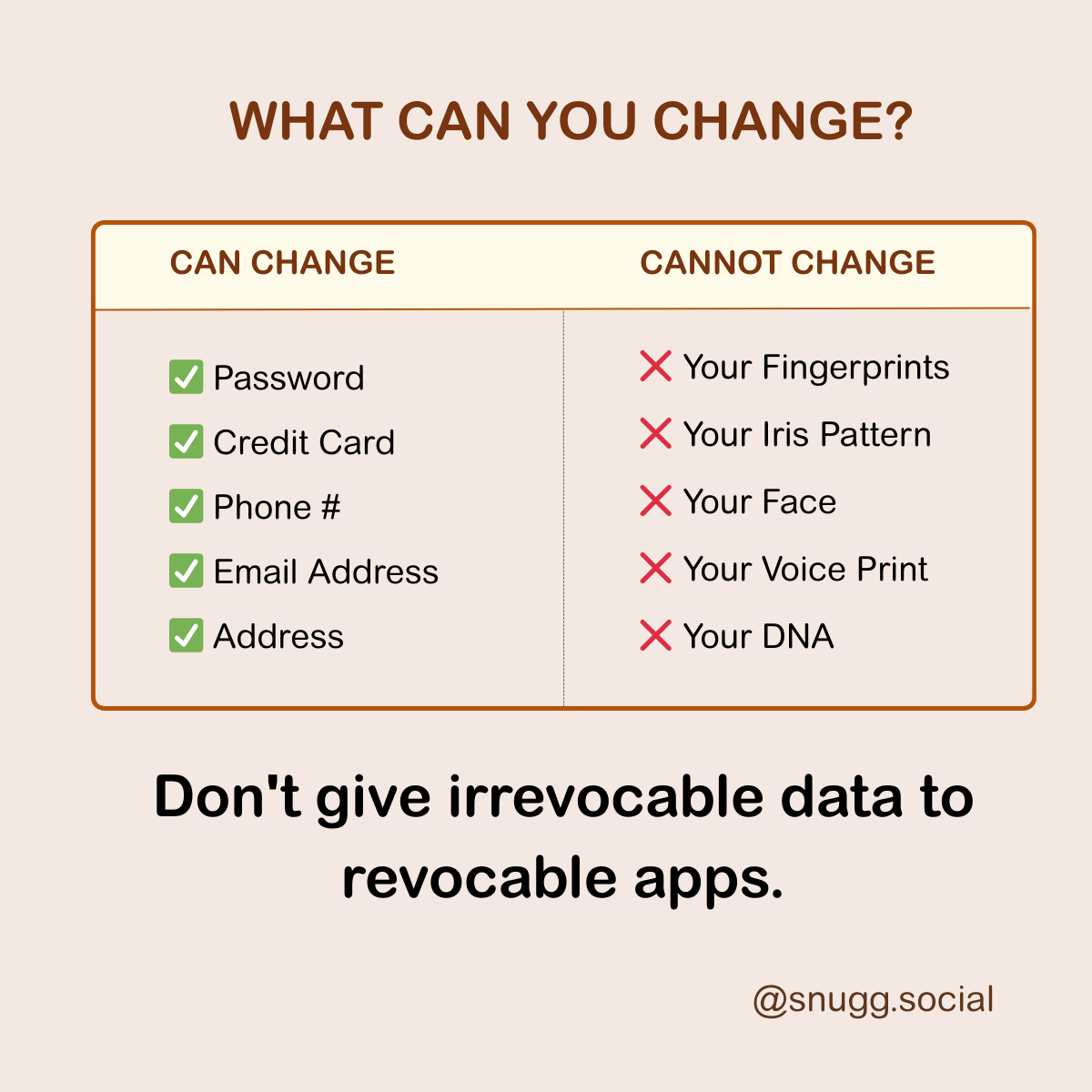

Think about every credential you use online.

Your password gets leaked? Annoying, but you change it. Problem solved.

Your credit card gets stolen? You cancel it, get a new one. Done.

Your email gets compromised? You create a new address. Move on.

Your phone number gets exposed? Get a new number. Sorted.

Even your home address—if it really becomes a problem, you can move.

But your face?

Your face is the one credential you can't change, rotate, or revoke.

It's you. Forever.

When a password database gets breached, everyone changes their passwords. When a biometric database gets breached, everyone keeps the same face.

Permanent exposure.

This is why mandatory facial recognition for age verification isn't just a privacy concern. It's a security catastrophe waiting to happen.

"But biometric databases are more secure"

Are they?

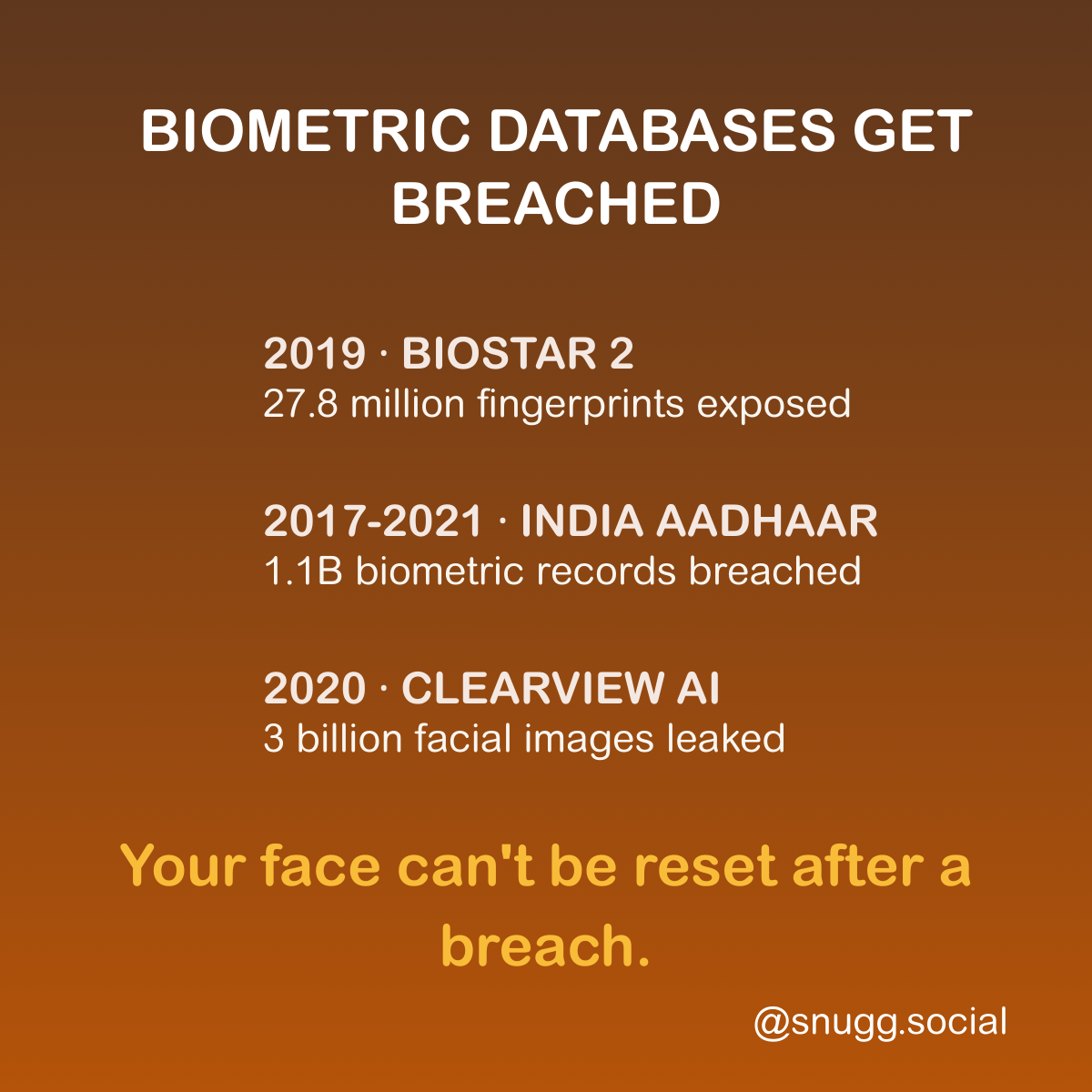

Let me tell you about BioStar 2.

In 2019, security researchers discovered an unencrypted database containing 27.8 million records. Fingerprints. Facial recognition data. Used by banks, defence contractors, and police forces, including the UK Metropolitan Police.

The fingerprints weren't hashed. They were stored as actual images. Images that could be copied.

The passwords? Stored in plaintext.
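For contrast, here's a minimal sketch of what storing a password properly can look like, using only Python's standard library. The function names and the iteration count are my own assumptions for illustration, not anything taken from BioStar 2 or any particular product. It shows why a leaked password hash stops mattering once you rotate the password.

```python
import hashlib
import hmac
import os
from typing import Tuple

# Assumed work factor for this sketch; a real deployment would tune it.
ITERATIONS = 200_000


def hash_password(password: str) -> Tuple[bytes, bytes]:
    """Return (salt, digest) using PBKDF2-HMAC-SHA256 with a fresh random salt."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, digest: bytes) -> bool:
    """Recompute the digest and compare it in constant time."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return hmac.compare_digest(candidate, digest)


# The database only ever holds the salt and digest, never the password.
salt, stored = hash_password("old-password")
print(verify_password("old-password", salt, stored))  # True

# After a breach, the user rotates. The old credential no longer opens anything.
salt, stored = hash_password("new-password")
print(verify_password("old-password", salt, stored))  # False
```

A fingerprint or face can't be handled quite this way. Two scans of the same finger never match bit for bit, so an exact hash comparison fails. And there is no "new-password" step available for a finger.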

The researchers found they could "manipulate the URL search criteria in Elasticsearch" to access everything. That's it. That's how sophisticated the attack needed to be.

Then there's Clearview AI.

In 2020, hackers stole their entire client list. The FBI. Department of Homeland Security. ICE. Over 600 law enforcement agencies.

Clearview had scraped 3 billion facial images from social media. And then someone stole the list of everyone who was using that database.

Clearview's response? "Unfortunately, data breaches are part of life in the 21st century."

Senator Ed Markey said what we're all thinking: "If your password gets breached, you can change your password. If your credit card number gets breached, you can cancel your card. But you can't change biometric information like your facial characteristics if a company like Clearview fails to keep that data secure."

India's Aadhaar system is the world's largest biometric database. 1.1 billion fingerprints and iris scans. It's been breached multiple times over several years.

The World Economic Forum's Global Risks Report 2019 called it "the largest data breach in the world."

In 2023, 815 million records appeared for sale on dark web forums. The asking price? $80,000. That works out to roughly a hundredth of a cent per record.

The FBI can search more than 641 million face images through its facial recognition systems. Most were collected for non-criminal purposes, such as driver's licences and passports. The GAO found the FBI had not fully assessed the accuracy of its own systems. No warrant required for searches.

Do you see the pattern?

A database gets created "for security" or "for verification." It grows. Mission creep adds more use cases. And eventually, it gets breached.

The question isn't IF biometric databases get breached. The question is WHEN. And how bad the damage is when it happens.

With passwords, the damage is temporary. Change your password, problem solved.

With biometric data, the damage is permanent. You can't change your face.

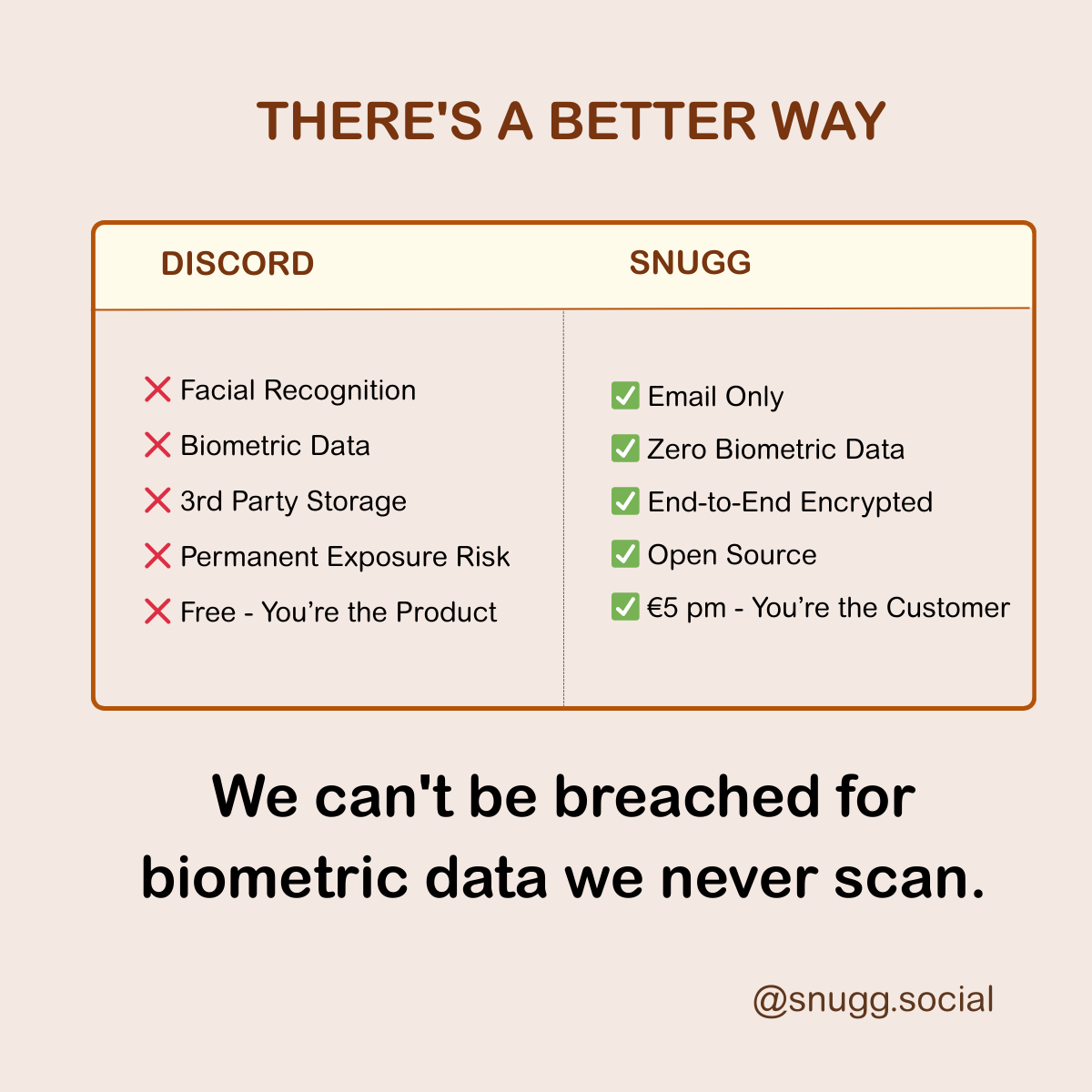

What Discord is actually asking

Discord's announcement sounds reasonable on the surface.

"To protect teens, users must verify their age using facial estimation."

Let me translate what they're actually asking for.

They want your face. An irrevocable biometric identifier. Scanned by a third-party company called Yoti. For a binary check—over 18 or not. "Processed briefly and then deleted," they say.

What do you get in return?

Continued use of a chat app.

What do you risk?

Biometric exposure if anything goes wrong. Third-party access to your facial data—you're not just trusting Discord, you're trusting Yoti. No recourse if the data is mishandled, because you can't change your face. And perhaps most importantly: you're normalising the practice. Accepting that this is a reasonable trade.

Here's the context that makes this particularly concerning.

This is the same company that had a third-party vendor hacked in September 2025, a vendor that handled age-verification appeals. The breach exposed around 70,000 government ID photos. Five months later, they're rolling out global facial verification.

Discord claims "these vendors were not involved" in that breach. But they're now using multiple vendors. We don't know which ones. We don't know their security track record.

You're providing biometric identifiers to use a gaming chat app.

The risk/reward ratio doesn't make sense.

Why "anonymised" doesn't mean safe

Discord's defence sounds reassuring.

"The facial data is processed on-device and never stored."

Let's examine what that actually means.

Your face gets scanned. Facial features get extracted—geometry, distances, patterns. An algorithm estimates your age. Discord says the image is deleted.

But here's what they're not telling you.

The process still extracts biometric features. Even if the original image is deleted, features were extracted and processed. Research demonstrates that facial embeddings—mathematical representations of your face—can be used to reconstruct recognisable faces.

"On-device processing" isn't always on-device. Discord states the video selfie is either processed on your device OR "processed briefly by Yoti and then deleted."

That "or" matters.

Recent research is concerning. Studies demonstrate "reconstructed faces can be used for accessing other real-world face recognition systems." A comprehensive survey on inverse biometrics found reconstruction techniques have been "applied to fingerprints, iris, handshape, face, and handwriting biometrics."

The assumption that "biometric templates do not contain enough information to be reverse engineered" is increasingly outdated.
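To make "embedding" less abstract, here's a toy sketch of how template matching generally works. Everything in it is an assumption for illustration: the vectors are made up and only four numbers long (real embeddings typically have hundreds of dimensions), the threshold is hypothetical, and this is not Yoti's or Discord's actual pipeline. It does not show reconstruction, only why a leaked template stays useful to whoever holds it.

```python
import math
from typing import Sequence

# Hypothetical match threshold; real systems tune this per model.
MATCH_THRESHOLD = 0.9


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def is_same_face(a: Sequence[float], b: Sequence[float]) -> bool:
    """Treat two embeddings as the same person if they are similar enough."""
    return cosine_similarity(a, b) >= MATCH_THRESHOLD


# Made-up embeddings, for illustration only.
leaked_template = [0.12, 0.80, 0.35, 0.45]    # extracted years ago, later leaked
new_capture_same = [0.10, 0.82, 0.33, 0.47]   # same person, fresh selfie today
different_person = [0.90, 0.10, 0.40, 0.05]

print(is_same_face(leaked_template, new_capture_same))   # True
print(is_same_face(leaked_template, different_person))   # False
```

Matching is about closeness, not exact equality. Your face produces roughly the same numbers every time, so a template that leaks once keeps matching you for as long as you have that face.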

You have to trust the implementation. How strong is the deletion? Is metadata stored alongside? What happens during transmission? The code is proprietary—you can't audit it.

Breaches happen at every stage. It's not just about final storage. Data can be intercepted during capture. During transmission. During processing. Before deletion completes.

A 2024 report found scores of vulnerabilities in biometric systems, including one where attackers could "access and remotely alter the machine's biometric database" and "upload their own face to the system."

The precedent problem

"It's just age verification. What's the big deal?"

The big deal is precedent.

We've seen this pattern before.

First, there's a reasonable justification. "We need to verify age to protect children." Who's going to argue with that?

Then, limited scope. "Only for age verification." "Only with your consent."

Then, scope expansion. "Also for identity verification." "Also for payment verification." "Also for content access."

Then, universal requirement. "To comply with regulations..." "For security reasons..." "All accounts must verify..."

And finally, normalised surveillance. Facial recognition is expected. Every platform requires it. You can't participate without submitting.

We've seen this pattern with phone numbers. Started as "optional recovery." Now required for most platforms.

We've seen it with real names. Started as a Facebook experiment. Now a standard expectation.

We've seen it with location access. Started as an "optional feature." Now many apps won't work without it.

Biometric data is the final frontier.

Once we normalise facial scans for "age verification," we've accepted that platforms can require irrevocable biometric credentials. That third parties can process our facial data. That convenience justifies permanent security risks.

If we accept it here, every platform will follow.

What you can do

You have options.

Don't verify. Keep your biometric privacy. No permanent exposure risk. You send a market signal—low verification rates mean bad policy. You may lose access to some Discord features. But if you can afford to lose Discord access, this is the safest option.

Verify with facial scan. Full Discord access maintained. But your face is processed by third parties. You've normalised the practice. Unknown long-term implications. Only consider this if Discord is critical infrastructure for you AND you understand the risks.

Leave Discord. Complete privacy protection. Vote with your feet—the most powerful market signal. You need to migrate communities and learn new platforms. But if it's viable, this is the principled choice.

Alternatives to Discord

If you're considering leaving, privacy-respecting alternatives exist.

For communities and groups, I'd recommend Matrix / Element. End-to-end encrypted messages and voice/video. Decentralised—no single company controls it. Open source, so the code is auditable. There's even a bridge to Discord for transitioning. No biometric data required. Free.

Revolt has a Discord-like interface if you want something familiar. Privacy-focused. Self-hostable. No biometric requirements. Also free.

For private messaging, Signal remains the gold standard. End-to-end encrypted, and designed to retain as little metadata as possible. Open source. No biometric data. Groups up to 1,000 people.

Session is like Signal but decentralised. No phone number required. Onion-routed for an extra privacy layer.

For social communities, Mastodon offers a decentralised social network. No surveillance. Choose your server or run your own. No biometric data required.

Why I'm building Snugg

1,000 people shared my message about biometric data.

That taught me something important. People know surveillance is a problem. They don't know solutions exist.

So let me be explicit about what we're building.

Snugg will never use facial recognition. Not now, not ever. No fingerprint scans. No iris scans. No voiceprints. No biometric data of any kind.

What we do use: email only—revocable if compromised. Optional username—pseudonymous by default. No phone number. No real name unless you choose to share it.

Why can we do this?

Because of our business model.

Free platforms need advertising. Advertising needs surveillance to target ads. Surveillance needs identity verification. Identity verification leads to biometric requirements.

Snugg costs €5 per month. We serve users, not advertisers. No need for surveillance. No need for irrevocable identity.

We chose a business model that doesn't require your biometric data.

We will never collect biometric data. Not for verification. Not for security. Not for features. Not for regulations. Not ever.

We can't abuse data we don't collect. Can't breach biometrics we never scan. Can't sell metadata we never capture. Can't train AI on data we don't have.

You're the customer, not the product. Subscription model means we serve you. No advertising means no surveillance incentive. No data sales means your data stays yours.

Open source means auditable. Code will be public. You can verify our promises. Community can inspect for backdoors.

The waitlist is open now. We launch in March 2026. The first 500 get a lifetime 40% discount—€3 per month forever.

The choice

Mandatory facial recognition isn't just a platform problem.

It's a precedent.

If we accept that chat apps can require irrevocable biometric identifiers, we've accepted that all platforms can.

Your password leaks—you change it. Your biometric data is leaked—you're compromised. Forever.

The trade-off doesn't make sense.

I'm building the alternative. Social media that never needs to see your face.

Because we can't be breached for biometric data we never collect.

Launch: March 2026 | First 500: Lifetime 40% discount

Related Reading

- Discord Wants to Scan Your Face. Here's Why I'm Worried. – Our detailed analysis of Discord's specific announcement

- Platform Comparison: Which Apps Actually Protect Privacy

- Free Doesn't Mean No Cost: The Real Price of Social Media

Sources & Further Reading

Biometric Data Breaches:

- BioStar 2 Database Breach - The Register - August 2019

- BioStar 2 Exposes 28 Million Records - Avast - August 2019

- Clearview AI Entire Client List Stolen - CNN - February 2020

- Clearview AI Data Breach - WeLiveSecurity - February 2020

- Aadhaar Data Breach Analysis - Huntress

- Aadhaar Security Fails - Privacy International

- Indian Aadhaar IDs on Dark Web - Resecurity - October 2023

- FBI Facial Recognition: GAO Report - June 2019

- FBI Can Search 400 Million Face Recognition Photos - EFF - June 2016

Biometric Hash Vulnerabilities:

- Face Reconstruction from Facial Embeddings - arXiv - 2026

- Face Reconstruction Using Adapter Models - arXiv - November 2024

- Reversing the Irreversible: Survey on Inverse Biometrics - arXiv - January 2024

Discord Age Verification:

- Discord Teen-by-Default Settings Announcement - February 2026

- Discord Age Assurance FAQ - Discord Support

- How to Complete Age Assurance on Discord - Discord Support

- Discord Data Breach: 5CA Vendor Hack - Bitdefender - October 2025

Major Data Breaches (Context):

- Equifax Data Breach Settlement - FTC - 147.9 million records

- 2017 Equifax Data Breach - Wikipedia

- Yahoo 3 Billion Accounts Breach - BreachSense

About the Author

I'm a yacht surveyor based in the Caribbean and the founder of Snugg. After 15 years watching social media platforms prioritise profits over privacy, I decided to build the alternative. I'm not a privacy activist—just someone who thinks you shouldn't need to scan your face to chat with friends.

About Snugg: I'm building the social media platform I wish existed. No ads. No tracking. No algorithms. No surveillance. Just you, your friends, and actual control over your digital life.

If this resonated with you, please share it. The more people who understand what's happening, the harder it is to normalise.